MX Study: Synthesizer Basics with the MX

The MX49 and MX61 are based on the same synthesis engine as the Motif XF and MOXF series of Yamaha Music Production Synthesizers. And while the MX is definitely the ‘little brother’ of the line, it is extremely powerful. Rather than reducing the sound-making capability, Yamaha designed the MX to function as an extremely mobile version of its bigger siblings. So while you do not have all of the features found in the larger, more expensive units, you can, to an uncanny degree, replicate the sounds that have made these synthesizers top of their class in the marketplace.

Many of the deeper Voice programming functions are only available via an external Editor. The MX can perform many of the magical things you hear in the Motif XF and MOXF, but you can program these only with an external computer EDITOR. You can then STORE the Voice to the MX’s internal memory and play it anytime you like. By this I mean that, once stored, the Voice is completely playable even when the MX is not connected to the computer editor. You can power down the computer and take the MX with you; the Voice behaves just like any other VOICE in the MX!

We will take a look at the basic concept of building a sound using the sample-playback engine found in all of these synthesizers. (At the bottom of this article you will find a ZIPPED Download with the 5 VOICE examples discussed in the article.) A brief look at the history of synthesizers is a good place to start. In the 1960s, experimentation with “voltage controlled” analog synthesizers was limited to college and university laboratories. But by the early 1970s the first commercially “affordable” monophonic and duophonic synthesizers started to hit the market. These had a few geometric waveforms (sine, saw, pulse, etc.) as sound sources. Typically you had a buzzy, bright Sawtooth waveform and an adjustable-width Pulse waveform. The buzzy Sawtooth (and reverse Sawtooth, sometimes called Saw Up and Saw Down) was used to create everything from strings to brass sounds, while the adjustable-width Pulse waveform was used to make everything from hollow sounds (clarinet/square wave) through to nasal tones (oboe, clavinet/narrow pulse).

Analog Synthesizer Background

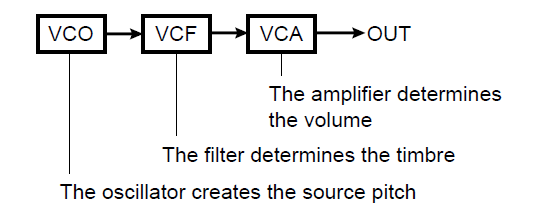

The fundamental building blocks in an analog synth are the sound source – the tone/timbre adjustment – the amplification.

The VCO or Voltage Controlled Oscillator was responsible for creating the musical pitch.

The VCF or Voltage Controlled Filter was responsible for shaping the tone/timbre of the sound.

The VCA or Voltage Controlled Amplifier was responsible for shaping and controlling the loudness.

This is also the basic synthesizer model that sample playback synthesizers are based on – with the significant difference that instead of shaping a handful (literally) of source waveforms into emulative sounds with voltage control, the sample synthesizer has an enormous library of highly accurate digital recordings of the actual instrument being emulated.

Without getting into a discussion of what can and cannot be replicated by samples versus analog voltage control, and other very inflamed subjects, let’s take a look at the as-yet unexplored possibilities that today’s sample playback engines offer, particularly since the synth engine today has far more variety and many more directions you can explore. (If you only look back, you may only see the past… or something like that.)

In most of the early analog synthesizers, the oscillators, no matter how many, all shared the same filter and the same amplifier, so sounds had a specific type of movement. As we’ll see, the sample playback engine here gives you 8 oscillators (Elements), each being a complete Oscillator-Filter-Amplifier building block. This “times 8” means you have a very flexible tone generating system that can be used to create extremely complex and detailed musical timbres.

SAMPLE PLAYBACK SYNTHESIS

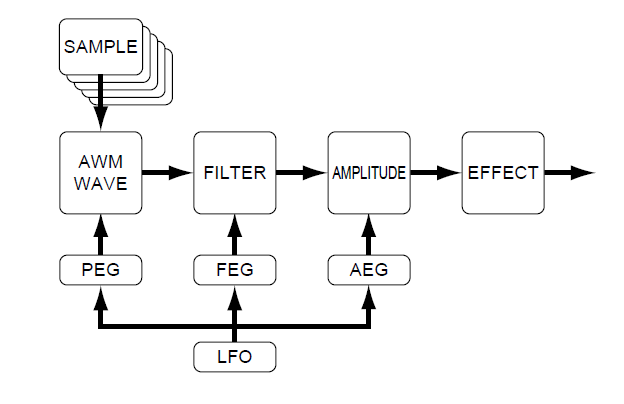

The block diagram of the current Yamaha AWM2 (sample playback engine) is shown here. Having a digital audio recording (sample) as the sound source significantly affects the results. Naturally, if you start with a waveform that is an audio copy of the real thing, you rely less on the Filter and Amplitude blocks to create the emulation; instead, you use them to refine the result. Because a digital recording is a fixed entity, samples work best for triggered-event musical instruments (the percussion family).

Instruments that can sustain and be played with notes of varying length present a unique problem for synthesizers based on digital recordings. Samples are most often looped, not so much to save memory, as many believe, but to allow the keyboardist to intuitively control the destiny of the note-on event (the note length). In other words, if you are playing a flute sample and the original player held the note for only 8 seconds, then you, as a keyboardist, would never be able to hold a note longer than that. (If you remember the early history of audio recordings being used in keyboards: the Mellotron had magnetic tape strips that engaged when you pressed a key, but you were limited to the length of that recording.) Also, if you decide to play a note shorter than the original player did, the envelope needs to move to an end strategy (Release) whenever you decide to lift your finger. Looping is a necessary evil in sample engines: it allows the keyboard to be used as an emulative instrument. Percussion instruments, like drum hits, are simple: they typically play their entire envelope without the need for looping (there are exceptions, the acoustic pianoforte being one of them).
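To make the looping idea concrete, here is a minimal Python sketch (not Yamaha’s actual AWM2 code) of how a playback position can run through the recorded attack once and then cycle through a loop region for as long as the key is held. The function name and the loop points are hypothetical; on a real instrument the loop points are set when the sample is prepared, and the AEG takes care of what happens at key-off.

```python
def source_index(n, loop_start, loop_end):
    """Return which recorded sample to play at output step n while the key is held.

    Hypothetical model: the attack (everything before loop_start) plays once,
    then the region loop_start..loop_end-1 repeats indefinitely.
    """
    if n < loop_start:
        return n  # still in the recorded attack: play it exactly as recorded
    loop_length = loop_end - loop_start
    return loop_start + (n - loop_start) % loop_length


# A tiny 10-sample "recording" with a loop over samples 4 through 7.
print([source_index(n, loop_start=4, loop_end=8) for n in range(14)])
# → [0, 1, 2, 3, 4, 5, 6, 7, 4, 5, 6, 7, 4, 5]
```

However long the key is held, the index never runs past the end of the loop, which is how a short recording can sustain for as long as you like.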

PEG, FEG, AEG and the LFO

Pitch, Filter and Amplitude are the pillars upon which the synthesizer is built. The fundamental structure again follows the paradigm of the analog synth engine. The Envelope Generator (EG) is a device that is responsible for how things change over time: the envelope “shape” determines how a sound comes in at key-on, what it does while it sustains, and how it disappears at key-off. For example, some instruments can scoop up to a pitch or drop off a pitch; this would be a job for the Pitch Envelope Generator. Some instruments change timbre and loudness as the note is held; for example, a trumpet note that slowly swells in volume (a crescendo) will need both a Filter Envelope and an Amplitude Envelope to create the shape of the sound.

The Low Frequency Oscillator (or LFO) is literally responsible for outputting frequencies that are sub-audio. We can hear musical tones between frequencies of 20 cycles per second and 20,000 cycles per second. Dogs can hear above 20,000, but did you know that you do not recognize sounds below 20 cycles per second as a cohesive sound? The sound breaks up into separate events, spaced evenly. These slow frequencies are used as “rates”. We can apply a change to a sound one or two times every second, or even change (called “modulate”) the sound five or ten times every second. These “low” speed oscillations can be applied to the Oscillator block (pitch), to the Filter block (timbre) and/or to the Amplitude block (loudness). When a rate of modulation is applied to pitch, we musicians refer to that as vibrato. When a rate of modulation is applied to a filter, we call that wah-wah. When a rate of modulation is applied to the amplifier, we call that tremolo.
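Here is a minimal Python sketch of that idea: one sine LFO, with a separate depth amount routing it to pitch (vibrato), filter cutoff (wah-wah) and level (tremolo). The function names, the use of cents and octaves as depth units, and the linear tremolo scaling are illustrative assumptions, not the MX’s internal parameter scales.

```python
import math

def lfo(t, rate_hz):
    """Sine LFO: a value between -1.0 and +1.0 at time t (in seconds)."""
    return math.sin(2 * math.pi * rate_hz * t)

def apply_lfo(t, rate_hz, pitch_hz, cutoff_hz,
              pitch_depth_cents=0.0, filter_depth_octaves=0.0, amp_depth=0.0):
    """Route one LFO to three destinations; a depth of 0 leaves that destination untouched."""
    m = lfo(t, rate_hz)
    vibrato_pitch = pitch_hz * 2 ** (pitch_depth_cents * m / 1200)  # pitch modulation
    wah_cutoff = cutoff_hz * 2 ** (filter_depth_octaves * m)         # filter modulation
    tremolo_level = 1.0 - amp_depth * (1.0 - m) / 2                  # level swings between 1.0 and 1 - amp_depth
    return vibrato_pitch, wah_cutoff, tremolo_level

# A 5 Hz LFO completes one cycle every 0.2 s; at t = 0.05 s it is at its peak.
print(apply_lfo(0.05, 5.0, 440.0, 1000.0, pitch_depth_cents=20, amp_depth=0.5))
```

The same LFO drives all three destinations; only the depth settings decide which musical gesture you actually hear.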

Pitch Modulation Depth – it sounds so scientific. But as musicians we understand this as a musical gesture called vibrato. As piano players, we are percussionists, and therefore we do not encounter this musical gesture in our vocabulary of terms. But think of any stringed instrument, such as a violin or an electric guitar. The oscillator is a plucked or bowed string. Once in motion it vibrates (oscillates) at a specific frequency (musical note). The pitch is determined by the length of string that is allowed to oscillate freely, between the fret being fingered and the bridge. Shorten that distance and the pitch goes higher; lengthen it and the pitch goes lower. Vibrato is the action of rhythmically varying the pitch sharper and flatter by moving the fretting finger up and down the string. Pitch Modulation Depth = Vibrato.

Filter Modulation Depth – again, scientific. Here’s your breakdown. You know what a filter does: a coffee filter keeps the grounds from getting into the part of the beverage that you drink. A filter removes certain ‘unwanted’ items and discards them. A musical Filter does this to the harmonics in a sound. As musicians we are at least familiar with harmonics, even if we cannot give a textbook definition. Harmonics are what we as humans use to recognize sounds; our identifying process can tell very minute differences in the harmonics within any sound. The textbook definition describes harmonics as the whole-integer multiples of the fundamental pitch. If you pluck a guitar string, say the open “A” string, it will oscillate at precisely 110 cycles per second. A cycle is one complete journey of a wave. A sine wave starts at 0 and reaches its maximum 1/4 of the way through its cycle; at the 1/2 point the amplitude returns to the 0 line; at the 3/4 point it reaches its deepest minimum, opposite the maximum; and finally it returns to the 0 line, completing one cycle. When the string is creating a pitch of 110 cycles, you can imagine that a very high-speed camera could capture a picture of 110 of these complete cycles. But you would find a certain number of pictures where the string contorts, and as the wave bounces back from the bridge you capture a picture where 220 cycles appear. And 330, 440, 550, 660 cycles, and so on. The number of pictures per second showing 220 would be somewhat less than the number of perfect 110s, the number showing 330 would be less still, and even fewer would show 440… These whole-integer multiples of the original pitch, 110, are the harmonics. When you match the harmonic content of a sound, the ear begins to believe it is hearing that sound. What a vocal impressionist does is attempt to mimic the harmonic content of the person they are trying to imitate. Harmonics are like the fingerprint of the sound.
They are why we have no problem telling apart a Trumpet playing A440 and a Trombone playing A440. Both are brass instruments of similar design. However, even when they play the same exact pitch, the loudness of each “upper” harmonic creates a unique and identifiable tone (timbre), and when we hear it we have no problem recognizing it. The job of a filter is to alter either the upper or lower harmonics to mimic the instrument being emulated. A Low Pass Filter (LPF) allows low frequencies to be heard and blocks high frequencies. The LPF is the most used filter in musical instrument emulations for a good reason: the harder you strike, pluck, hammer, blow through, or bow a musical instrument, the richer it becomes in harmonics. The Low Pass Filter mimics this behavior: often an LPF is used to restrict the brightness of a sound so that as we play harder, the sound gets brighter, just as it does in nature. When you change the tone or timbre of a musical instrument, you are playing with its harmonic content. The musical gesture is commonly referred to as “wah-wah”. And contrary to popular belief, it was not developed initially as a guitar pedal. Wah-wah as a term predates the guitar pedal; you can imagine some trumpet or trombone player with a mute or a plumber’s plunger using it for comic effect in the jazz bands of the 1920s through the 1940s. Covering your mouth with your hand and then removing it can create a wah-wah sound: as you block high frequencies and then release them by removing your hand, rhythmically, you are applying Filter Modulation Depth.
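The harmonic series for the open A string is easy to compute: each harmonic is a whole-integer multiple of 110. As an extra illustration, this Python sketch also lists the textbook relative levels of an ideal sawtooth, whose nth harmonic has 1/n the amplitude of the fundamental. Those are idealized values for a perfect sawtooth, not measurements of the P5 sample or of any real instrument, and the function names are just for illustration.

```python
import math

def harmonics(fundamental_hz, count):
    """First `count` harmonics: whole-integer multiples of the fundamental."""
    return [fundamental_hz * n for n in range(1, count + 1)]

def ideal_saw_level_db(n):
    """Level of the nth harmonic of an ideal sawtooth relative to the fundamental (amplitude 1/n)."""
    return 20 * math.log10(1 / n)

for n, freq in enumerate(harmonics(110, 6), start=1):
    print(f"harmonic {n}: {freq} Hz, {ideal_saw_level_db(n):.1f} dB")
```

Change those relative levels and you change the “fingerprint”; that is exactly the lever a filter pulls.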

Amplitude Modulation Depth – An amplifier is designed to either increase or decrease signal. Yes, I said it: “…and decrease signal.” Rhythmically increasing and decreasing the volume of a musical tone is, again, a gesture we piano players do not encounter, but there are instruments that can easily do this by increasing and decreasing the pressure applied to the instrument. Tremolo is the musical term; usually a string orchestra executing a tremolo phrase comes to mind, or the term as used on guitar amplifiers, where the volume is rhythmically pulsed up and down. Amplitude Modulation Depth = tremolo. Once you start relating synth terminology to what you already know as a musician, you will find that all of this gets much easier.

If the synthesizer were only doing piano you can see that Pitch Modulation, Filter Modulation and Amplitude Modulation do not get called upon much at all. We should mention here that with analog synthesizers you had to use these devices to help build the sound you were emulating. But in the world of the sampled audio Oscillator – we can opt to record the real thing. And in some cases that is exactly what you will find. There are examples – and we will see these in future articles – where these musical gestures are recorded into the sample and we’ll also find examples of when they are created by the synth engine applying an LFO to control the modulation.

Sample-playback synthesizers and analog synthesizers function according to the same basic block diagram; the biggest difference, obviously, is in the oscillator (sound source) area. Rather than an electronic circuit that generates pitched tones in response to precise voltage changes, the pitched sound is provided by a digital recording set to play across the range of MIDI. This basic block diagram works for recreating instrument sounds. It is not the only way to synthesize, but it survives because it works fairly well. In every acoustic instrument you have the same building blocks: a tone source (something providing the vibration/oscillation); the instrument itself as the filter (the shape and material used to build the instrument affect its tone); and the amplifier, which can be the musician’s lungs, cheeks, or diaphragm, a bellows, an attached resonant chamber, etc. It is not a perfect system, and it is not the only system; it is one of the “state-of-the-art” systems that developed to fill our needs.

Sawtooth Waveform Example

We’ll take a simple Waveform, the Sawtooth, and show you how it is shaped into Strings, Brass, and Synth Lead sounds using the basic synthesis engine. Exploring it will show you how this same Waveform can be used to build different sounds. Back in the early days of synths (I’m talking when MiniMoogs and ARP Odysseys roamed the Earth), there were really only two types of synth sounds: “Lead” sounds and “Bass” sounds. If you played high on the keyboard, it was called a Synth Lead sound, and if you played principally below middle “C”, it was called a Synth Bass sound!

Oh, there were booklets that showed you how to set the knobs or sliders to create a flute or oboe or clarinet or what have you, but at the end of the day, most people listening to you would walk up and ask what that sound was ‘supposed’ to be. It makes me smile now to think how close we thought we were with some of those sounds. But it can be argued that the analog synthesizer ultimately went away because of the demand for more and more realistic emulations of acoustic instruments (and because polyphony was too expensive to achieve with just analog as a source). Not every person wanted this, but this is what (looking back) drove the direction of evolution. There are synthesizer sounds that are just synth sounds, and that’s cool. Some sounds are not trying to ‘be something else’.

We should mention that there are some things that can be done on an analog synthesizer, or a real saxophone, that cannot be duplicated by sample playback instruments in the same way. That is always going to be true, whether emulating real or synthesized instruments, because you cannot manipulate a recording of some things in the same way you can manipulate the real thing. And for those things nothing will replace the actual instrument. But to consider this some kind of “limitation” is to give up without even exploring what NEW avenues are now open. And that would be just silly. There are a number of things you can do now that we didn’t even dare dream about back then! And that is for you to explore…

Let’s start by exploring a group of very different-sounding VOICEs made from the same source Waveform, “P5: SawDown 0 dg”.

Used in the VOICE “Soft RnB” (this is a sampled Prophet V Sawtooth Down wave; the 0 degrees refers to the phase of the stored waveform). For those not around then, the Prophet V (the “V” is the Roman numeral for 5) was one of the popular polyphonic synthesizers of the day… with a big five-note polyphony, priced at about one thousand dollars a note (as we used to say…), it opened the door to popularizing synthesizers in the early 1980s. The golden age of synthesis was about to begin. Sawtooth waveforms come in two varieties: Sawtooth Up ramps up in amplitude and then drops immediately, while Sawtooth Down jumps immediately to maximum amplitude and then ramps down.
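The two Sawtooth varieties can be written as simple functions of the position within one cycle (the phase, running from 0 up to 1). This is an idealized Python sketch with hypothetical function names; a sampled Prophet V wave will not be this perfectly straight.

```python
def saw_up(phase):
    """Ideal Sawtooth Up: ramps from -1 to +1 over one cycle, then drops back immediately."""
    x = phase % 1.0          # position within the current cycle, 0.0 <= x < 1.0
    return 2.0 * x - 1.0

def saw_down(phase):
    """Ideal Sawtooth Down: jumps to +1, ramps down to -1, then jumps back up."""
    x = phase % 1.0
    return 1.0 - 2.0 * x

for p in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(p, saw_up(p), saw_down(p))
```

Each wave is the other turned upside down, which is why the two varieties contain the same harmonics.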

In general, the Sawtooth Waveform is a very familiar ‘analog’ sound – this Synth Lead Voice is typical of the type heard extensively in the 1970s and 80s. And it is a good sonic place to start so you can see/hear how it is based on the same waveform that builds synth strings and synth brass sounds.

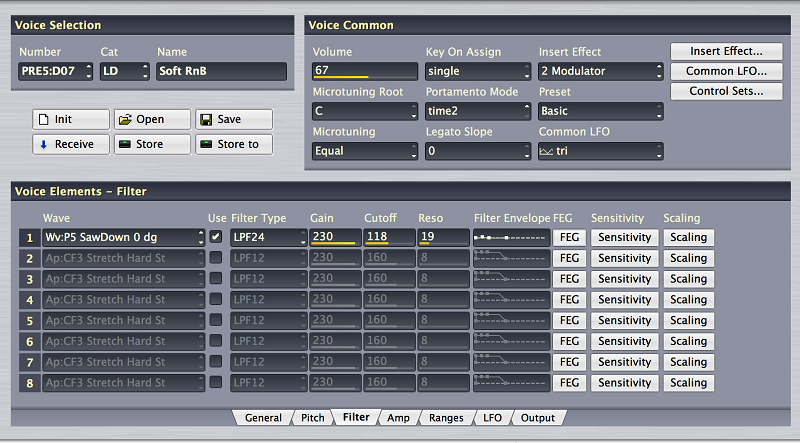

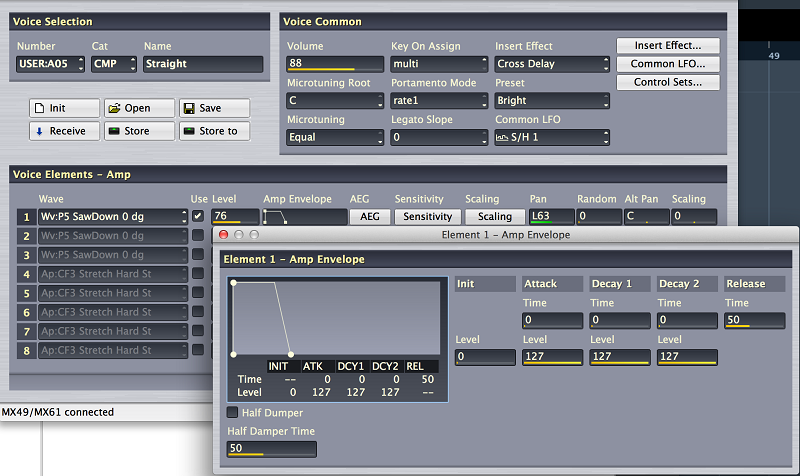

Shown above is the main Voice Editor screen in the John Melas Voice Editor.

The VOICE being shown is “Soft RnB”

Press [SHIFT] + [SELECT] to execute a QUICK RESET

Press button [9] to select the SYN LEAD category

Advance to LD: 018 Soft RnB

EXPERIMENT 1: Frequency Experiment

Take a few minutes and explore playing this sound. Play across all octaves, experiment with the KNOB CONTROL FUNCTIONS, etc. Try raising the CUTOFF FREQUENCY (turn the Cutoff knob clockwise from 12 o’clock). The 12 o’clock position will always restore this parameter to the ‘stored’ value for the filters. For this experiment set the CUTOFF Knob to about 3 o’clock. This will give us a very bright and buzzy sawtooth sound.

Play the “A” above middle “C” (A440), then play each “A” going lower, in turn: A220, A110, A55, A27.5 (this is the lowest ‘A’ on the acoustic piano, “A-1”). Using OCT DOWN, go one octave lower and play the “A” at A13.75… Notice how the sound is no longer the bright and buzzy Sawtooth Wave but has broken down into a series of CLICKS; you can almost count them: there are thirteen and three-quarters of them every second. (By the way, you cannot go any lower on the “A”s than A13.75: the lowest MIDI note is C-2, at approximately 8.176 cycles per second, and below that the frequency loops around.) When it is said that you cannot hear below 20 cycles per second, what is meant is that you simply do not recognize it as a contiguous (musical) tone. You have reached the edge of that sense of perception. As you play chromatically up from A13.75, you will hear the clicks get closer and closer together. It is very much like placing dots on a piece of paper closer and closer together: soon your eye no longer sees them as separate dots but perceives them as a continuous line. When these audible clicks get close enough, you start to hear them as a musical tone (somewhere around 20 times per second). You know this, or have at least heard about it, probably from science class in grade school. Anything that vibrates (oscillates) at precisely 440 times a second will give off the pitch “A”, and the frequency range of the human ear is approximately 20 to 20,000 cycles per second. (Just FYI: the highest note on the piano is C7, which is 4,186.009 cycles per second.) Quick math should tell you that we can hear about 2 octaves and change above that highest “C”.
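The numbers in this experiment follow from the equal-tempered tuning formula, in which each octave doubles the frequency and A440 is MIDI note 69. This Python sketch reproduces them. The octave numbering follows the Yamaha convention used in this article (MIDI note 0 is C-2, so middle C, note 60, is C3); the helper names are just for illustration.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def note_hz(midi_note):
    """Equal-tempered frequency of a MIDI note number, with A440 = note 69."""
    return 440.0 * 2 ** ((midi_note - 69) / 12)

def yamaha_name(midi_note):
    """Note name with Yamaha octave numbering (MIDI note 0 = C-2, middle C = C3)."""
    return f"{NOTE_NAMES[midi_note % 12]}{midi_note // 12 - 2}"

# Each "A" from A440 down to the lowest A that MIDI can reach.
for note in (69, 57, 45, 33, 21, 9):
    print(yamaha_name(note), note_hz(note))

print(yamaha_name(0), round(note_hz(0), 3))      # lowest MIDI note
print(yamaha_name(108), round(note_hz(108), 3))  # highest key on the piano
# Octaves from the top piano C up to the ~20,000 Hz limit of hearing:
print(round(math.log2(20000 / note_hz(108)), 2))
```

The last line is the “quick math”: a little over two octaves of hearing remain above the piano’s top C.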

We are looking at a simple one-Element Voice. It uses a Waveform from the geometric Wave category, “Wv:P5 SawDown 0dg”. This is a Prophet V Sawtooth Down sample; the “0 dg” is the phase orientation of the source sample. This can account for subtle movement within the Voice, by simply combining two Waveforms with different phase relationships, without necessarily “detuning” the oscillator. Understanding “Phase” will take you back to your grade school days (when you actually knew all of this stuff: sine, cosine, remember?). We’ll come back to this later.
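Here is an idealized Python sketch of what a phase offset alone does when two copies of the same sawtooth are layered at exactly the same pitch. The 180-degree case is the extreme one: the fundamental and every odd harmonic cancel, leaving a sawtooth one octave higher, while other offsets change the balance of harmonics in other ways. This models perfect waveforms, not the MX’s stored samples, and the function names are hypothetical.

```python
def saw_down(t, phase_deg=0.0):
    """Ideal falling sawtooth at time t (measured in cycles), offset by phase_deg degrees."""
    x = (t + phase_deg / 360.0) % 1.0
    return 1.0 - 2.0 * x

def layered(t, phase_a_deg, phase_b_deg):
    """Sum of two same-pitch sawtooth layers that differ only in phase."""
    return saw_down(t, phase_a_deg) + saw_down(t, phase_b_deg)

samples = [0.0, 0.125, 0.25, 0.375, 0.5]
print([layered(t, 0, 0) for t in samples])    # in phase: same shape, twice the level
# → [2.0, 1.5, 1.0, 0.5, 0.0]
print([layered(t, 0, 180) for t in samples])  # 180 degrees apart: repeats every half cycle
# → [1.0, 0.5, 0.0, -0.5, 1.0]
```

Nothing was detuned here; only the phase relationship changed, yet the combined waveform is different.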

The fact that we are starting with a single oscillator is going to be important, because each Element can be a complete instrument or a building block within an instrument. Each Element has its own complete signal path: its own Filter and Filter EG, its own Amplifier and Amplitude EG, its own LFO, etc. It is a complete synth all by itself!!!

Experiment 2: Exploring the Voice parameters

Back in the day, the Sawtooth waveform was used to make string and brass Voices. When I worked as an audio engineer, we had cause to splice tape in the studio. If you have a recording of a string section holding a long chord and a separate recording of a brass section holding a long chord, it is very difficult to tell the two apart if you cut off the ATTACK and the RELEASE portions, allowing someone to hear only the sustained segment. We would edit out those portions of tape and just play the chord without its natural attack segment and without its natural decay segment. It is at that point that your ear/brain realizes that the two timbres are very, very similar. It is the very important ENVELOPE (which shapes the sound over time) that helps you recognize what you are hearing. It is much like an optical illusion where they show the same line against two different backgrounds and clearly one is bigger than the other, I mean, clearly… then they remove the background and you cannot believe that the two lines are, in fact, the same length. This is an “aural illusion”: until you experience the timbre without its Attack and Release, you’d swear they were very different tones from different waveforms.

Let’s take a look at the FILTER – the Filter determines how dark or bright a sound is. It does this by altering the balance of musical harmonics. Each musical tone has a unique ‘fingerprint’ which is created by the volume levels of each successively higher Harmonic. As musicians we should have a fundamental working knowledge of Harmonics. These are the whole integer multiples of the Fundamental Frequency. By allowing or filtering out a specific frequency range the Filter causes us to hear the balance of the upper harmonics differently. And that is how a synthesizer can hope to “fool” the listener – by not only mimicking the harmonic content, but the attack, sustain, and release characteristics of the instrument in question.

What is CUTOFF FREQUENCY? This is a Low Pass Filter (LPF24 is a 24 dB per octave filter), one that allows low frequencies to pass and blocks high frequencies. The Cutoff Frequency is the point along the range of all frequencies where this filter begins FILTERING OUT upper harmonics. One octave above that point, the level will have decreased by 24 dB (a very steep drop-off in volume above the Cutoff Frequency). By raising the Cutoff Frequency, the sound will immediately begin to brighten (we are allowing more high frequencies to pass through). Move the Cutoff Frequency and observe. If you click on the FEG box on Element 1 (shown below), you will get a graphic that will help you visualize exactly what is happening.
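The 24 dB per octave figure can be turned into a quick calculation. This Python sketch uses the common straight-line (asymptotic) approximation; a real filter rolls off gradually around the cutoff, and the MX’s Cutoff parameter values (such as 240) are not hertz, so the 440 Hz cutoff below is purely a hypothetical example.

```python
import math

def lpf24_db(freq_hz, cutoff_hz):
    """Straight-line model of a 24 dB/octave low-pass filter: no cut below the
    cutoff, then 24 dB less level for every octave above it."""
    if freq_hz <= cutoff_hz:
        return 0.0
    return -24.0 * math.log2(freq_hz / cutoff_hz)

# Harmonics of the 110 Hz open A string with a hypothetical 440 Hz cutoff.
for freq in (110, 220, 330, 440, 550, 660, 880, 1760):
    print(freq, round(lpf24_db(freq, 440), 1))
```

Raising the cutoff lets more of the upper harmonics through at full level, which is the brightening you hear when you turn the knob.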

Please raise the Cutoff parameter to 240 and hear how this affects your perception of the sawtooth waveform.

Let’s take a look at the AEG (Amplitude Envelope Generator) – which determines how the sound is shaped in terms of LOUDNESS. How it starts (attack), continues (sustain) and disappears (release). The synth lead is structured as follows:

Click on the “AMP” tab along the bottom of the main VOICE screen and then click on the “AEG” button to view the graphic of the Amplitude Envelope.

AEG (Amplitude Envelope Generator)

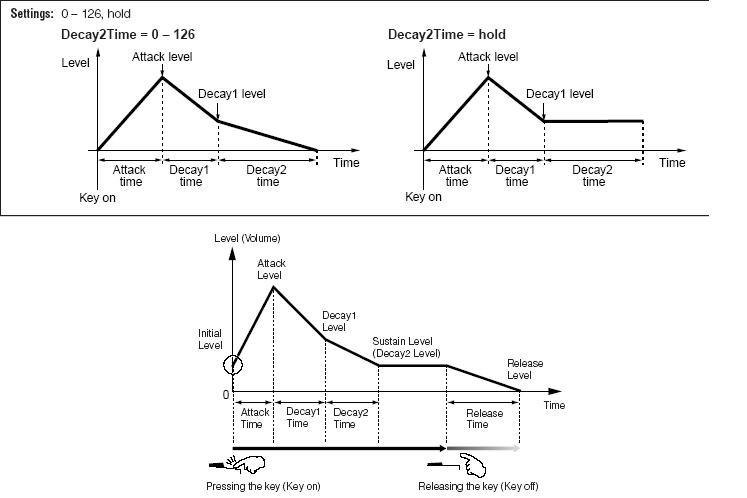

The AEG (Amplitude Envelope Generator) consists of the following components:

- Initial Level

- Attack Time

- Attack Level

- Decay 1 Time

- Decay 1 Level

- Decay 2 Time

- Decay 2 Level

- Release Time

These are the parameters that the synth uses to describe what in old analog synthesizers was referred to as the ADSR (Attack-Decay-Sustain-Release). It uses a series of TIME and LEVEL settings to describe the loudness shape of the sound.

In the first screenshot below you see a graphic that describes a DRUM or PERCUSSION envelope. In the second screenshot, you see a graphic where the DECAY 2 TIME is set to 127, or HOLD: the envelope is set to play indefinitely.

In the third screenshot, you see a typical musical (Non-Drum/Percussion) instrument described.

If DECAY 2 LEVEL is 0, you have a percussive sound. If DECAY 2 LEVEL is not 0, you have a sound that depends on KEY-OFF to begin the RELEASE portion… to return to a level of 0. We emphasize this point because it is key to understanding musical behavior. TIME can be understood as how long it takes to move from LEVEL to LEVEL. If DECAY 2 LEVEL is anything but 0, the sound will never go away while a key is held… As long as a key is held, the level will eventually reach the value set by DECAY 2 LEVEL. If that value is 0, the sound will eventually die out.

By the way: RELEASE LEVEL is always going to be 0 (that is why the parameter is not included).
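To see why DECAY 2 LEVEL decides between “percussive” and “sustained”, here is a minimal Python model of the Time/Level envelope listed above. It is a sketch under simplifying assumptions: times are in seconds and levels run from 0.0 to 1.0 (the MX itself uses 0-127 parameter values), every segment is a straight line, and the HOLD setting for DECAY 2 TIME is not modeled. The class and function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class AEG:
    """Hypothetical AEG: times in seconds, levels 0.0-1.0 (RELEASE always ends at 0)."""
    initial_level: float
    attack_time: float
    attack_level: float
    decay1_time: float
    decay1_level: float
    decay2_time: float
    decay2_level: float
    release_time: float

def _ramp(start, end, elapsed, duration):
    """Straight-line move from start to end over `duration` seconds (instant if 0)."""
    if duration <= 0 or elapsed >= duration:
        return end
    return start + (end - start) * elapsed / duration

def level_while_held(env, t):
    """Envelope level t seconds after key-on, with the key (or sustain pedal) still down."""
    segments = [
        (env.initial_level, env.attack_level, env.attack_time),
        (env.attack_level, env.decay1_level, env.decay1_time),
        (env.decay1_level, env.decay2_level, env.decay2_time),
    ]
    for start, end, duration in segments:
        if t < duration:
            return _ramp(start, end, t, duration)
        t -= duration
    return env.decay2_level  # stays here until key-off

def level_after_release(env, held_for, t):
    """Level t seconds after key-off: RELEASE heads to 0 from wherever the envelope was."""
    return _ramp(level_while_held(env, held_for), 0.0, t, env.release_time)

piano_like = AEG(0.0, 0.0, 1.0, 0.5, 0.6, 8.0, 0.0, 0.3)    # DECAY 2 LEVEL = 0
strings_like = AEG(0.0, 0.4, 1.0, 0.3, 0.9, 1.0, 0.8, 0.6)  # DECAY 2 LEVEL > 0

print(level_while_held(piano_like, 20.0))            # died away even with the key held
print(level_while_held(strings_like, 20.0))          # sits at DECAY 2 LEVEL until key-off
print(level_after_release(strings_like, 20.0, 0.6))  # RELEASE has brought it to 0
```

Only DECAY 2 LEVEL differs in kind between the two examples, and that alone decides whether the sound outlasts a held key.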

In a Piano (and all percussion family instruments), the DECAY 2 LEVEL is 0. You can delay the eventual return to level = 0 with the sustain pedal or by holding the key… but the sound will stop vibrating eventually. Of course, if you release the KEY at any point in the envelope, the sound proceeds directly to the RELEASE TIME.

RELEASE TIME on a piano is not immediate; you don’t often appreciate it until it is wrong. But there is a definite slope to how the sound disappears: if you set the RELEASE TIME too fast, it is just not comfortable, and if you set it too slow, it is not right either. Experiment with a PIANO Voice’s Release Time and you’ll hear immediately what I mean.

STRING sounds: Strings that are played with a bow fall into a family of instruments that are considered “self-oscillating” as opposed to “percussive”. Instruments that are bowed or blown fall into this ‘self-oscillating’ category. Here’s what this means:

The sound vibration is initiated by bowing (applying pressure puts the string in motion) or blowing (pressure puts a column of air in motion) and will continue as long as the pressure is maintained by the performer. This can be indefinitely. The bow can change direction and continue the vibration indefinitely… the wind player can circular-breathe and continue the vibration indefinitely.

BRASS sounds: Brass sounds are typically programmed, like strings, to sustain (continue) as long as the key is held.

The AEG in these types of instruments will very rarely have DECAY 2 LEVEL at 0, because they typically need to continue to sound as long as the key is engaged (the key being engaged is how you apply the “pressure”). This does not mean you couldn’t set up a String Orchestra sound that “behaved” like a percussion instrument; it is just that most string sounds are programmed to be “bowed” (to continue the vibration) according to how and when you hold and/or release the key.

If you engage a sustain pedal on a sound whose DECAY 2 LEVEL is NOT equal to 0, the sound will be maintained (forever) at the DECAY 2 LEVEL.

If you engage a sustain pedal on a sound before it reaches an output level of 0, and the DECAY 2 LEVEL is not 0, the sound will be latched at the level it has reached thus far.

Remember that an envelope develops over time. Therefore, if you lift the sustain pedal (KEY-OFF), the sound jumps immediately to the RELEASE TIME portion of the envelope… If you re-engage the pedal before the sound reaches LEVEL = 0, the envelope will be latched at the level it has reached.

PITCH and FILTER ENVELOPES

Understanding how the envelope Time and Level parameters function is key to emulative programming. Getting the Attack and the Release correct goes a long way toward making the result sound like the instrument being emulated. As you’ll soon hear, this same waveform is used to create Synth Strings, Synth Brass and a Synth Lead VOICE.

Please use the Editor to explore the PEG, FEG, and AEG to gain an understanding of how they shape the same source waveform into very different sounds. Don’t be afraid to try different values – use the graphic to let your eyes support what your ears are hearing. A gradual Attack should look like an uphill ramp, while a quick Attack should look like a steep climb, and so on.

To make the String and Brass tones sound like a section of strings and brass, I copied Element 1 into Element 2 and used the Detune (Fine) to create a sense of an ensemble. We want you to begin to use the Editor to visually explore the VOICE and the settings. Please experiment with changing the TIME and LEVEL parameters of the various ENVELOPES. Let’s quickly take a look at the Filter movement (the Filter Envelope Generators are responsible for harmonic movement within a Voice). In the VOICE provided in the download data, USER 05: “Straight”, let’s take a look at the FEG:

In the screenshot above I have (temporarily) silenced Elements 2 and 3 so I can concentrate and better hear what I’m doing. You do so by unchecking the box in the column labeled “USE”.

I’ve clicked on the FILTER tab and I’ve clicked on the FEG button to open the pop up window that shows the details of what is happening with the Filter assigned to Element 1. Notice the unique shape of the envelope: A sharp rise at the ATTACK TIME (0 is almost immediate) means the filter is flipped open at the beginning of the sound (LEVEL = +112), then at a rate of 60 it closes a bit to a LEVEL = +67… as you continue to hold the key down (either physically or with a sustain pedal) the filter continues to close to +21 at a speed of 67… then returns to neutral at a speed of 65 when the key is released. The FEG DEPTH (lower left corner of the Filter Envelope screen) is very important because it determines how deeply this filter movement is applied. If FEG DEPTH = +0, as you may have guessed, NOTHING HAPPENS… no DEPTH means no filter movement will be applied. The higher the number value the deeper the application of the Envelope.
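The role of FEG DEPTH can be sketched as a simple multiplier on the envelope shape. The stage values below are the ones described for Element 1 of “Straight”; treating depth as a 0.0 to 1.0 multiplier is an assumption made for illustration, not a description of how the MX scales its FEG DEPTH parameter, and the names are hypothetical.

```python
# FEG stages for Element 1 of "Straight" as described for the Editor screen:
# (stage, time value, target level). These are raw MX parameter values,
# not seconds or hertz.
FEG_STRAIGHT = [
    ("Attack", 0, +112),
    ("Decay 1", 60, +67),
    ("Decay 2", 67, +21),
    ("Release", 65, 0),
]

def scaled_filter_levels(stages, depth):
    """Scale each stage's target level by FEG depth.

    `depth` is a hypothetical normalized amount (0.0 = none, 1.0 = full);
    the MX's own FEG DEPTH scale is not modeled here.
    """
    return [(name, level * depth) for name, _time, level in stages]

print(scaled_filter_levels(FEG_STRAIGHT, 0.0))  # depth 0: no filter movement at all
print(scaled_filter_levels(FEG_STRAIGHT, 0.5))  # half depth: same shape, half as deep
```

The envelope shape stays the same; depth only decides how much of it reaches the filter.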

In the screen shot below I have included the AEG (amplitude change) that is happening to Element 1 at this same time. In order to view this, I selected the “AMP” tab and then pressed the AEG button for Element 1. Of significance is that AMPLITUDE means loudness – if the AMPLITUDE ENVELOPE shuts the sound down, then no matter what FILTER changes you have set, they will not be heard. In other words, TIME is a critical factor. If you setup a FILTER movement (Envelope) that requires more time than the AMPLITUDE envelope allows, well, you simply will not hear it. Make sense? Opening or closing the FILTER when there is no AMPLITUDE to support it, means it takes place but like the tree in the empty forest – no one can hear it!

Okay, those are the logistics. Please note that this filter movement gives this sound a unique harmonic shape, which should make sense to you as you see the FEG graphic. With the Melas Voice Editor you can either work with the numbers or edit the graph itself (position the mouse exactly over the dot that represents the parameter in question and drag it to a new location; the number values will change accordingly).
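Why does copying Element 1 into Element 2 and detuning it slightly, as was done for the String and Brass Voices, sound like an ensemble? Two tones a few cents apart drift in and out of phase, producing a slow beating. This Python sketch computes the beat rate; it assumes the detune is expressed in cents (hundredths of a semitone), which may not match the units of the MX’s Detune (Fine) parameter, and the function name is hypothetical.

```python
def beat_rate_hz(freq_hz, detune_cents):
    """Beats per second between a tone and a copy detuned by `detune_cents`."""
    detuned_hz = freq_hz * 2 ** (detune_cents / 1200)
    return abs(detuned_hz - freq_hz)

# The same 5-cent detune beats faster as you play higher up the keyboard.
for freq in (110.0, 440.0, 1760.0):
    print(freq, round(beat_rate_hz(freq, 5), 2))
```

This is one reason a fixed detune can sound lush in the middle of the keyboard but noticeably faster and more wobbly near the top.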

In future articles in this series, we will build upon these basic sounds.

DOWNLOAD THESE EXAMPLES:

The download is a ZIPPED file containing a VOICE EDITOR File (.VOI), which requires that you have the John Melas MX Voice Editor and a working USB connection to your computer in order to use this data.

If you do not have the John Melas Voice Editor and wish to load these Voices, there is also an MX ALL data file (.X5A) that will load these five Voices into the first five USER locations in your MX.

IMPORTANT WARNING: please make a Backup ALL data FILE (.X5A) of your current MX data so that you can restore it after working with this tutorial.

The Example VOICES:

USER 01: LD: Init Saw – Basic initialized Voice with the raw Waveform placed in Element 1

USER 02: ST: SawStrings – With minor adjustments to the tone (Filter) and volume (Amplitude) we have fashioned strings; uses two detuned Elements

USER 03: BR: SawBrass – With a few more minor adjustments we have fashioned a brass ensemble – again, uses two Elements detuned

USER 04: LD: Soft RnB – Basic soft sawtooth lead

USER 05: CMP: Straight – Synthy, brassy, 1980’s through “1999” type sound

Files:

Please find at the bottom of the page, the ZIPPED DOWNLOAD containing the data discussed in three different formats for convenience. (It is the same data in all three files).

MXsawtoothStudy.VOI – John Melas MX Voice Editor File (all other data in the file, excepting the 5 USER bank Voices for this tutorial, are INITIALIZED Voices)

MX_SawtoothVoices.ALLmx – John Melas MX Total Librarian File (all other data in the file, excepting the 5 USER bank Voices for this tutorial, are the Factory Set)

MXsaw.X5A – Yamaha MX49/MX61 ALL data Library File (all other data in the file, excepting the 5 USER bank Voices for this tutorial, are the Factory Set)

- Loading the .X5A MX Library File: Unzip the data and transfer just the file to a USB stick you use to Save/Load data to your MX49/61. The USER Voices are found by CATEGORY:

LD = Synth Lead;

ST = Strings;

BR = Brass;

CMP = Synth Comp

(User Voices are always added to end of the List in the indicated Category).

Use [SHIFT] + [CATEGORY] to advance quickly through Categories/SubCategories.

- Using the Melas File formats: Unzip the data and transfer the file to a folder on your computer desktop. Launch the Melas Editor and open the File with the Editor.

© 2025 Yamaha Corporation of America and Yamaha Corporation. All rights reserved.